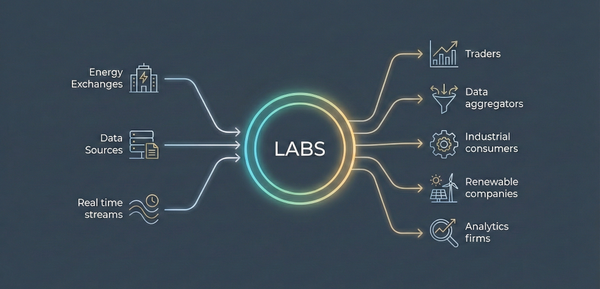

Data pipelines move, transform, and store data on its way from sources to destinations such as data warehouses, data lakes, or analytics tools. This article explores the fundamental components, design patterns, and best practices for constructing data pipelines. We'll also look at specific implementations using AWS, Azure, and Google Cloud, and spotlight popular tools and frameworks.

Understanding Data Pipeline Architecture

A well-designed data pipeline architecture ensures seamless data flow from source to destination. Here are the primary components:

Data Sources

Data originates from applications, databases, IoT devices, APIs, or files. These sources feed raw data into the pipeline. For example, a sales application may generate transactional data, while IoT devices might produce continuous streams of sensor data.

Data Ingestion

Tools like Apache Kafka and Apache NiFi handle the ingestion of both batch and streaming data. Kafka is particularly useful for real-time data streaming and can handle high-throughput use cases, while NiFi offers an easy-to-use interface for managing data flows.

Data Transformation

ETL (Extract, Transform, Load) processes transform data before loading it into storage, ensuring data quality and consistency. ELT (Extract, Load, Transform) processes load raw data first and then transform it within the storage system, leveraging the processing power of modern data warehouses and lakes.

ETL Example

A financial institution might use ETL to clean and aggregate transaction data before loading it into a data warehouse for compliance reporting.

ELT Example

An e-commerce platform might store raw clickstream data in a data lake and perform ad-hoc transformations as needed for marketing analytics.
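The difference between the two orderings can be sketched with Python's built-in `sqlite3` module standing in for the storage system. This is a minimal illustration, not a production design: the sample rows, table names, and the `run_etl` and `run_elt` helpers are assumptions made for the example.

```python
import sqlite3

# Hypothetical raw transactions: (id, customer, amount as text).
# One amount is missing and must be excluded from the totals.
RAW_ROWS = [
    ("t1", "alice", "10.50"),
    ("t2", "bob", "4.25"),
    ("t3", "alice", None),
    ("t4", "alice", "2.00"),
]


def run_etl(conn):
    """ETL: clean and aggregate in application code, then load the result."""
    totals = {}
    for _txn_id, customer, amount in RAW_ROWS:
        if amount is not None:
            totals[customer] = totals.get(customer, 0.0) + float(amount)
    conn.execute("CREATE TABLE etl_totals (customer TEXT, total REAL)")
    conn.executemany("INSERT INTO etl_totals VALUES (?, ?)", sorted(totals.items()))
    return conn.execute(
        "SELECT customer, total FROM etl_totals ORDER BY customer"
    ).fetchall()


def run_elt(conn):
    """ELT: load raw rows untouched, then transform with SQL inside the store."""
    conn.execute("CREATE TABLE raw_txn (txn_id TEXT, customer TEXT, amount TEXT)")
    conn.executemany("INSERT INTO raw_txn VALUES (?, ?, ?)", RAW_ROWS)
    return conn.execute(
        "SELECT customer, SUM(CAST(amount AS REAL)) FROM raw_txn "
        "WHERE amount IS NOT NULL GROUP BY customer ORDER BY customer"
    ).fetchall()


etl_result = run_etl(sqlite3.connect(":memory:"))
elt_result = run_elt(sqlite3.connect(":memory:"))
```

Both paths produce the same per-customer totals. In the ELT variant the raw rows remain available in `raw_txn`, so a different transformation can be run later without re-ingesting the data.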

Data Storage

Data is stored in data lakes, data warehouses, or databases. Data lakes (e.g., Amazon S3, Azure Data Lake) typically hold raw and semi-structured data, while data warehouses (e.g., Amazon Redshift, Google BigQuery) hold structured data optimized for analysis.

Data Consumption

End-users and applications access the data for analytics, reporting, machine learning, and business intelligence. Tools like Tableau, Power BI, and custom analytics applications are commonly used in this stage.

Data Pipeline Design Patterns

Data pipelines can follow different design patterns depending on the requirements:

Comparison Table: Batch vs Stream Processing
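As a rough illustration of how batch and stream processing differ, the sketch below computes the same total two ways. The function names and sample values are assumptions for the example; real systems add concerns such as windowing, ordering, and fault tolerance.

```python
from typing import Iterable, Iterator


def batch_total(events: Iterable[int]) -> int:
    """Batch: collect the full, bounded dataset, then process it in one pass."""
    collected = list(events)
    return sum(collected)


def stream_totals(events: Iterable[int]) -> Iterator[int]:
    """Stream: update and emit a running result as each event arrives."""
    running = 0
    for value in events:
        running += value
        yield running


sample_events = [3, 5, 2]
final_total = batch_total(sample_events)
running_totals = list(stream_totals(sample_events))
```

The batch version yields one answer after all data is available, while the streaming version yields an up-to-date answer after every event.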

Data Pipeline Tools and Frameworks

Choosing the right tools and frameworks is crucial for building effective data pipelines.

- ETL Tools: Apache NiFi, Apache Airflow, Talend.
- Data Streaming Platforms: Apache Kafka, AWS Kinesis.
- Cloud Services: AWS Glue, Azure Data Factory, Google Cloud Dataflow.
- Open Source: Airbyte, Singer, and dbt are popular open-source tools for building data pipelines.

Popular Data Pipeline Tools

Data Pipeline Examples

Data Pipeline on AWS

AWS provides robust tools for building data pipelines, such as AWS Glue for data integration and transformation, and Amazon Redshift for data warehousing. AWS Glue offers a serverless environment for running ETL jobs, making it easier to discover, prepare, and combine data for analytics.

AWS Data Pipeline Example

- Data Source: Transactional data from an e-commerce application.
- Ingestion: AWS Kinesis for real-time data streaming.
- Transformation: AWS Glue for data cleaning and aggregation.
- Storage: Amazon Redshift for structured data warehousing.
- Consumption: Tableau for business intelligence reporting.
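The ingestion step above might start with something like the following sketch, which shapes an order into the arguments of a Kinesis `PutRecord` request. The stream name, order fields, and the `build_kinesis_record` helper are hypothetical; the actual send is left as a comment because it needs `boto3` and AWS credentials.

```python
import json


def build_kinesis_record(stream_name, order):
    """Return keyword arguments shaped for a Kinesis PutRecord request."""
    return {
        "StreamName": stream_name,
        # Kinesis carries the payload as bytes; JSON is one common encoding.
        "Data": json.dumps(order, sort_keys=True).encode("utf-8"),
        # Records sharing a partition key are routed to the same shard.
        "PartitionKey": str(order["customer_id"]),
    }


# Hypothetical order emitted by the e-commerce application.
order = {"order_id": 1, "customer_id": 42, "total": 9.99}
record = build_kinesis_record("orders-stream", order)

# With boto3 installed and credentials configured, sending would look like:
#   import boto3
#   boto3.client("kinesis").put_record(**record)
```

Using the customer ID as the partition key is a design choice for this example: it keeps one customer's orders in sequence on a single shard, at the cost of uneven load if a few customers dominate traffic.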

Data Pipeline on Azure

Azure Data Factory is a powerful service for creating, scheduling, and orchestrating data pipelines. It integrates seamlessly with other Azure services like Azure SQL Database and Azure Synapse Analytics. Azure also provides extensive monitoring and management features to ensure the reliability and performance of data pipelines.

Azure Data Pipeline Example

- Data Source: Sensor data from IoT devices.
- Ingestion: Azure IoT Hub for data collection.
- Transformation: Azure Data Factory for data preprocessing and enrichment.
- Storage: Azure Data Lake for raw data, Azure Synapse Analytics for processed data.
- Consumption: Power BI for real-time dashboards and analytics.
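To make "preprocessing and enrichment" concrete, here is a plain-Python sketch of what such a transformation step might do to sensor readings. It does not use any Azure API; the field names, the valid temperature range, and the device metadata are assumptions for illustration.

```python
# Hypothetical lookup table describing where each device is installed.
DEVICE_METADATA = {
    "dev-1": {"site": "plant-a"},
    "dev-2": {"site": "plant-b"},
}


def preprocess_and_enrich(readings, metadata, min_c=-40.0, max_c=125.0):
    """Drop missing or out-of-range readings, then attach the device's site."""
    enriched = []
    for reading in readings:
        temp = reading.get("temp_c")
        if temp is None or not (min_c <= temp <= max_c):
            continue  # preprocessing: discard unusable readings
        site = metadata.get(reading["device_id"], {}).get("site", "unknown")
        enriched.append({**reading, "site": site})  # enrichment
    return enriched


raw_readings = [
    {"device_id": "dev-1", "temp_c": 21.5},
    {"device_id": "dev-2", "temp_c": 999.0},  # sensor glitch, out of range
    {"device_id": "dev-3", "temp_c": 18.0},   # device not in the metadata
    {"device_id": "dev-1", "temp_c": None},   # missing value
]
clean_readings = preprocess_and_enrich(raw_readings, DEVICE_METADATA)
```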

Data Pipeline on Google Cloud

Google Cloud offers Dataflow for real-time processing, BigQuery for data warehousing, and Cloud Storage for scalable storage solutions. Google Cloud's data pipeline services are designed to handle both batch and streaming data, providing flexibility and scalability for various use cases.

Google Cloud Data Pipeline Example

- Data Source: Log data from web applications.
- Ingestion: Google Pub/Sub for message ingestion.
- Transformation: Google Dataflow for stream and batch processing.
- Storage: Google BigQuery for analytics.
- Consumption: Looker for data visualization and exploration.
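Stream processing of logs often groups events into fixed time windows before writing aggregates to the warehouse. The sketch below imitates that idea in plain Python rather than using the Dataflow or Apache Beam APIs; the event format (epoch seconds plus a message) and the 60-second window are assumptions.

```python
from collections import Counter


def count_per_window(events, window_seconds=60):
    """Count log events per fixed (tumbling) window, keyed by window start."""
    counts = Counter()
    for timestamp, _message in events:
        # Integer division maps each timestamp to the start of its window.
        window_start = (timestamp // window_seconds) * window_seconds
        counts[window_start] += 1
    return dict(sorted(counts.items()))


# Hypothetical log events: (epoch seconds, message).
log_events = [(0, "GET /"), (59, "GET /cart"), (60, "POST /order"), (125, "GET /")]
window_counts = count_per_window(log_events)
```

Here the first two events fall into the window starting at 0, and the other two land in the windows starting at 60 and 120.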

Best Practices for Data Pipeline Automation and Security

Automation

Automate repetitive tasks to improve efficiency and reduce errors. Tools like Apache Airflow enable scheduling and monitoring of pipeline workflows, helping ensure timely and reliable data processing.

Example

Automating ETL workflows in Airflow to update a data warehouse with daily sales data.
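Orchestrators such as Airflow model a workflow as a graph of tasks with dependencies. The following sketch is not Airflow code; it uses Python's standard `graphlib` module to show the underlying idea of running tasks in dependency order. The task names and the `run_pipeline` helper are hypothetical.

```python
from graphlib import TopologicalSorter

# Each task maps to the set of tasks that must finish before it starts.
DAILY_SALES_DAG = {
    "extract_sales": set(),
    "transform_sales": {"extract_sales"},
    "load_warehouse": {"transform_sales"},
    "refresh_report": {"load_warehouse"},
}


def run_pipeline(dag, tasks):
    """Run every task after its dependencies and return the execution order."""
    executed = []
    for name in TopologicalSorter(dag).static_order():
        tasks[name]()
        executed.append(name)
    return executed


# Placeholder task bodies; a real pipeline would query, transform, and load data.
noop_tasks = {name: (lambda: None) for name in DAILY_SALES_DAG}
execution_order = run_pipeline(DAILY_SALES_DAG, noop_tasks)
```

A real orchestrator adds scheduling, retries, and monitoring on top of this ordering step.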

Security

Implement robust security measures such as data encryption, access control, and monitoring to protect sensitive data. Ensure compliance with data governance policies to safeguard data integrity and privacy.

Example

Encrypting data at rest and in transit using AWS KMS and setting up IAM roles for access control.
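Access control usually comes down to granting each role only the permissions it needs. The sketch below shows that principle in plain Python; it is not IAM policy syntax, and the role names and permission strings are invented for the example.

```python
# Hypothetical roles and the actions each one is permitted to perform.
ROLE_PERMISSIONS = {
    "analyst": {"warehouse:read"},
    "pipeline": {"lake:read", "warehouse:write"},
}


def is_allowed(role, action):
    """Deny by default: allow only actions explicitly granted to the role."""
    return action in ROLE_PERMISSIONS.get(role, set())


analyst_can_read = is_allowed("analyst", "warehouse:read")
analyst_can_write = is_allowed("analyst", "warehouse:write")
```

Unknown roles receive an empty permission set, so anything not explicitly granted is refused.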

Monitoring and Maintenance

Continuously monitor pipeline performance and set up alerts for failures. Regular maintenance is essential to keep the pipeline running smoothly. Tools like Datadog and Prometheus can provide comprehensive monitoring solutions.

Example

Using Prometheus to monitor Kafka consumer lag and set up alerts for delays.
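Consumer lag is the gap between the newest offset in each partition and the offset the consumer has committed. A monitoring system would normally scrape these numbers from an exporter; the sketch below only shows the arithmetic and the alert condition, with made-up offsets and a hypothetical threshold.

```python
def consumer_lag(end_offsets, committed_offsets):
    """Lag per partition = latest offset minus the consumer's committed offset."""
    return {
        partition: max(end - committed_offsets.get(partition, 0), 0)
        for partition, end in end_offsets.items()
    }


def partitions_over_threshold(lag_by_partition, threshold):
    """Return the partitions whose lag exceeds the alert threshold."""
    return sorted(p for p, lag in lag_by_partition.items() if lag > threshold)


# Hypothetical offsets for a two-partition topic.
latest = {0: 100, 1: 250}
committed = {0: 95, 1: 100}
lag = consumer_lag(latest, committed)
alerting = partitions_over_threshold(lag, threshold=50)
```

With these numbers, partition 1 is 150 messages behind and would trigger the alert, while partition 0 is only 5 behind.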

Data Pipeline for Machine Learning

Data pipelines are critical for feeding clean, high-quality data into machine learning models. They handle data ingestion, transformation, and storage, ensuring that data scientists and machine learning engineers have the right data for training and inference. Effective data pipelines enable continuous integration and deployment of ML models, facilitating rapid experimentation and model updates.

Data Pipeline for Machine Learning Workflow

Here are the steps involved in a data pipeline for machine learning:

- Data Ingestion: Collect data from various sources.
- Data Transformation: Clean and preprocess data.
- Data Storage: Store transformed data in a data lake or warehouse.
- Model Training: Use stored data for training machine learning models.
- Model Deployment: Deploy trained models for inference.
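The five steps above can be sketched end to end in a few lines of plain Python. Everything here is a toy stand-in: the sample data, the in-memory `store` dictionary, and the one-variable least-squares model are assumptions chosen to keep the example self-contained.

```python
def ingest():
    """Ingestion: hypothetical (hours_run, wear) pairs, one with a missing value."""
    return [(1.0, 2.0), (2.0, 4.0), (None, 5.0), (3.0, 6.0)]


def transform(rows):
    """Transformation: drop records with missing fields."""
    return [(x, y) for x, y in rows if x is not None and y is not None]


def train(rows):
    """Training: fit y = slope * x + intercept by ordinary least squares."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in rows)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def deploy(model):
    """Deployment: wrap the fitted parameters in a prediction function."""
    slope, intercept = model
    return lambda x: slope * x + intercept


store = {}  # Storage: an in-memory stand-in for a data lake or warehouse.
store["training_data"] = transform(ingest())
predict = deploy(train(store["training_data"]))
```

Calling `predict(4.0)` then returns an estimate from the fitted line, which mirrors how a deployed model serves inference requests.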

Example: Machine Learning Data Pipeline for Predictive Maintenance

- Data Source: Sensor data from manufacturing equipment.
- Ingestion: Azure IoT Hub for real-time data collection.
- Transformation: Azure Databricks for data cleaning and feature engineering.
- Storage: Azure Data Lake for raw data, Azure Synapse Analytics for processed data.
- Model Training: Azure Machine Learning for building predictive models.
- Model Deployment: Azure Kubernetes Service (AKS) for serving the model.
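Feature engineering for predictive maintenance often smooths noisy sensor readings before training. One common choice is a rolling mean, sketched here in plain Python rather than with Databricks or Spark; the window size and the vibration values are assumptions.

```python
from collections import deque


def rolling_mean(values, window):
    """Return the mean of the last `window` readings at each position."""
    recent = deque(maxlen=window)  # the oldest reading drops out automatically
    features = []
    for value in values:
        recent.append(value)
        features.append(sum(recent) / len(recent))
    return features


# Hypothetical vibration readings from one machine.
vibration = [1.0, 3.0, 5.0, 7.0]
smoothed = rolling_mean(vibration, window=2)
```

The first output equals the first reading because only one value is available; from then on each feature averages the two most recent readings.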

ETL vs ELT

ETL (Extract, Transform, Load) is one type of data pipeline, in which data is extracted, transformed, and then loaded, but not all data pipelines follow this sequence. Some use ELT (Extract, Load, Transform), where data is first loaded into storage and then transformed as needed. The choice between ETL and ELT depends on the specific requirements of the data processing task and the capabilities of the storage systems used.

Comparison Table: ETL vs ELT

Conclusion

Building effective data pipelines requires careful planning and the right tools. By understanding the architecture, design patterns, and best practices, you can create robust pipelines that meet your data processing needs. Whether you're using AWS, Azure, or Google Cloud, utilizing automation and ensuring security are key to maintaining a reliable data pipeline.

If you have any questions, please feel free to reach out to our team!